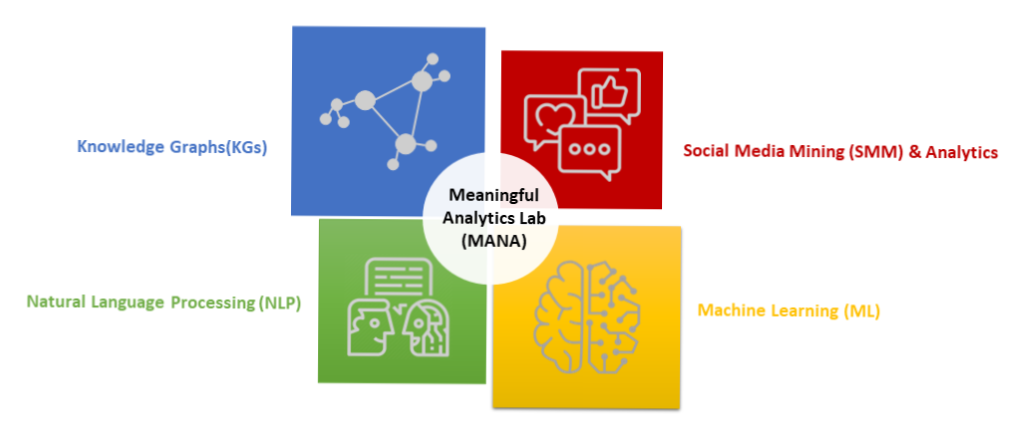

MANA – Meaningful Analytics Lab

The Meaningful Analytics Lab (MANA) is part of the Management Intelligence Services (MIS) research group. "Meaningful" stands for trustworthy, transparent, and reliable analytics that unlock the true value of data in an organization. We focus on using the right data, algorithms, and tools, which not only generate accurate results but also help domain experts understand the “how” and “why” behind the output. Furthermore, we primarily conduct applied research, focusing on use cases with high practical relevance, especially in the healthcare and production domains.

The analytics methods and techniques used in our research are also taught in our lectures and seminars. For details, please refer to the descriptions below.

Knowledge Graphs (KGs)

Data Science and Machine Learning, especially Deep Learning, have achieved unprecedented results over the last few years, with many successful applications in various domains. Recently, however, both academia and industry have been discussing an important drawback: the inability to understand the reasoning behind so-called “black-box” models. Consequently, attention has turned to developing new methods, or further improving existing ones, that help domain experts better understand the output of a machine learning model. This is part of a new branch of AI known as Explainable AI (XAI).

Knowledge Graphs are a fairly non-technical approach and a modern implementation of symbolic AI, in which symbols serve as elements of a lingua franca between humans and deep learning (Futia & Vetro, 2020). KGs are explainable by design and are therefore a promising solution to the problem of understandability (ibid.).

Currently, most explainable ML methods focus on interpreting the inner workings of black-box models, for example by identifying and better visualizing the associations between the input features and the output. These methods, however, cannot account for either the context or the background knowledge that users may possess. KGs represent domain-specific knowledge in machine-readable form, and the connected datasets could serve as background knowledge that helps an AI system better explain its decisions to users (Tiddi & Schlobach, 2022).
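To make the idea of background knowledge more concrete, here is a minimal sketch. It assumes a hypothetical black-box model has flagged the machine `Machine_M1` as high-risk, and it searches a toy KG, stored as (subject, predicate, object) triples, for a chain of facts linking that machine to a known problem. All entities and relations are invented for illustration. Real KGs are usually stored as RDF and queried with SPARQL rather than held in Python lists.

```python
from collections import deque

# Hypothetical toy knowledge graph for a production setting, stored as
# (subject, predicate, object) triples. All entities are invented.
TRIPLES = [
    ("Machine_M1", "has_component", "Bearing_B7"),
    ("Bearing_B7", "supplied_by", "Supplier_S3"),
    ("Supplier_S3", "delivered", "Batch_42"),
    ("Batch_42", "has_status", "Recalled"),
    ("Machine_M2", "has_component", "Bearing_B9"),
]


def build_index(triples):
    """Index outgoing edges per subject: subject -> [(predicate, object), ...]."""
    index = {}
    for s, p, o in triples:
        index.setdefault(s, []).append((p, o))
    return index


def explain(index, start, target):
    """Breadth-first search for the shortest chain of triples from start to target.

    Returns the chain as a list of triples, or None if the KG holds no such chain.
    """
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == target:
            return path
        for predicate, obj in index.get(node, []):
            if obj not in visited:
                visited.add(obj)
                queue.append((obj, path + [(node, predicate, obj)]))
    return None


index = build_index(TRIPLES)
# Human-readable justification: why might Machine_M1 be linked to a recall?
for s, p, o in explain(index, "Machine_M1", "Recalled"):
    print(f"{s} --{p}--> {o}")
```

The returned chain of facts is what a user could inspect alongside the model's prediction. For `Machine_M2`, no chain exists, so the KG offers no supporting context.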

Seminar: Advanced Business Analytics

Natural Language Processing (NLP)

IBM defines NLP as the branch of computer science and AI concerned with giving computers the ability to understand text and spoken words in much the same way human beings can (IBM, 2022). Processing natural language generally involves translating it into data (numbers) that a computer can use to learn about the world (Lane et al., 2019).
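One of the simplest ways to translate text into numbers is a bag-of-words representation, in which each document becomes a vector of word counts over a shared vocabulary. The sketch below is a minimal illustration, assuming a naive lowercase/regex tokenizer. Practical pipelines use proper tokenizers and richer representations such as TF-IDF weights or learned embeddings.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens (naive assumption)."""
    return re.findall(r"[a-z']+", text.lower())


def bag_of_words(documents):
    """Turn each document into a vector of word counts over a shared, sorted vocabulary."""
    tokenized = [tokenize(doc) for doc in documents]
    vocabulary = sorted({tok for tokens in tokenized for tok in tokens})
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append([counts[word] for word in vocabulary])
    return vocabulary, vectors


docs = ["The model predicts demand.", "The demand is high, the supply is low."]
vocab, vecs = bag_of_words(docs)
print(vocab)  # ['demand', 'high', 'is', 'low', 'model', 'predicts', 'supply', 'the']
print(vecs)   # [[1, 0, 0, 0, 1, 1, 0, 1], [1, 1, 2, 1, 0, 0, 1, 2]]
```

Note that word order is lost: the vectors record only which words occur and how often, which is often enough for tasks like text classification.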

Major NLP tasks are:

- Named Entity Recognition (NER) – labels sequences of words in a text that are the names of things, such as person and company names, or gene and protein names (Stanford NER, 2022).

- Sentiment analysis – the part of text analytics that extracts subjective information from a text source, chiefly its polarity (positive, negative, or neutral). It is also known as opinion mining or emotion AI. Common use cases are the analysis of customer feedback, survey responses, and product reviews.

- Part-of-speech (POS) tagging – determines the role each word plays in a sentence (e.g., noun, verb, adjective). It serves, for instance, to identify the most important keywords in a sentence or to assist in searching for specific usages of a word (such as Will as a proper noun versus will as a modal verb, as in “you will regret that”) (Ingersoll et al., 2013). POS tagging is used in NER, sentiment analysis, question answering, and word sense disambiguation (O’Reilly, 2022).

- Natural language generation – the process of producing natural language text to meet specified communicative goals. Generated texts can take the form of a single phrase, multi-sentence remarks, or full-page explanations (Dong et al., 2021).
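Sentiment polarity, as described in the list above, can be illustrated with a lexicon-based scorer, one of the simplest approaches. The sketch below assumes a tiny hand-made lexicon with invented scores and a single negation rule. Real systems rely on curated lexicons with thousands of entries, or on trained models.

```python
import re

# Hypothetical, hand-made polarity lexicon (scores are illustrative only).
LEXICON = {"good": 1, "great": 2, "excellent": 2, "bad": -1, "poor": -1, "terrible": -2}
NEGATIONS = {"not", "never", "no"}


def polarity(text):
    """Classify text as positive, negative or neutral by summing word scores.

    A negation word flips the sign of the score of the next word only.
    """
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    negate = False
    for token in tokens:
        if token in NEGATIONS:
            negate = True
            continue
        value = LEXICON.get(token, 0)
        score += -value if negate else value
        negate = False
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


for review in ["The support was great", "The delivery was not good",
               "Good product, terrible packaging", "The package arrived on Tuesday"]:
    print(review, "->", polarity(review))
```

The mixed review ends up negative because the stronger negative word outweighs the positive one. Such cases show why simple word counting is often replaced by models that take more context into account.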

Many techniques have been developed for NLP. In the last few years, however, two NLP models developed by Google and OpenAI have stood out, both able to add a human touch to interactions with an artificial agent.

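BERT (Bidirectional Encoder Representations from Transformers) was released by Google in 2018; its larger variant, BERT-Large, has 340 million parameters. GPT-3 (Generative Pre-trained Transformer 3), released by OpenAI in 2020, has 175 billion parameters. BERT generally requires fine-tuning for each downstream task, whereas GPT-3 can often perform a task from a few examples given in its input prompt, without fine-tuning. However, because of its size, a regular user would most likely run out of memory trying to run a model like GPT-3 on their own hardware. Both models are used for tasks such as natural language inference, text classification, and question answering.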
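A back-of-the-envelope calculation shows why model size matters for memory. The sketch below uses the parameter counts given above (340 million for BERT, 175 billion for GPT-3) and assumes 4 bytes per parameter for 32-bit floats and 2 bytes for 16-bit floats. It counts only the memory needed to hold the weights. Activations, and optimizer state during training, add considerably more.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter):
    """Memory needed just to hold the model weights, in gigabytes (10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 10**9


# Assumed precisions: 32-bit floats (4 bytes) for BERT, 16-bit floats (2 bytes) for GPT-3.
bert = weight_memory_gb(340_000_000, 4)
gpt3 = weight_memory_gb(175_000_000_000, 2)
print(f"BERT, 340M parameters (fp32): {bert:.2f} GB")   # 1.36 GB
print(f"GPT-3, 175B parameters (fp16): {gpt3:.0f} GB")  # 350 GB
```

Even at reduced precision, GPT-3's weights alone would need roughly 350 GB, far more than the memory of a single consumer GPU, whereas BERT fits comfortably.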

Seminars: Social and Web Intelligence, Advanced Business Analytics

Social Media Mining (SMM) & Analytics

Social Media Mining is concerned with the extraction and analysis of user-generated content. We spend an ever-increasing amount of time online, and through our actions and interactions we knowingly and unknowingly create what is now called digital trace data. Examples of data we create knowingly are Twitter or Facebook posts and the pictures we share on Instagram or Pinterest. Examples of data we create unknowingly are so-called metadata, such as the location of a Twitter post or the device used to post the message.

These data are an enormous and valuable resource for studying human behavior. Their main advantage is (mostly) free access to rich and genuine data. Important disadvantages relate to the preprocessing and storage of such data and, in certain use cases, to ethical concerns, especially in health-related studies.

Methods for analyzing digital trace data rely mostly on NLP techniques (for processing text data), computer vision (for processing image data), and social network analysis (for analyzing interaction data, primarily based on metadata).
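As a small illustration of social network analysis on interaction metadata, the sketch below builds a directed "who mentions whom" network and ranks users by normalized in-degree centrality, the share of other users who mention them. The mention records are hypothetical. In practice they would be extracted from post metadata, and libraries such as NetworkX offer many more centrality measures.

```python
from collections import defaultdict

# Hypothetical interaction records: (author, mentioned_user) pairs.
MENTIONS = [
    ("alice", "bob"),
    ("carol", "bob"),
    ("dave", "bob"),
    ("bob", "alice"),
    ("carol", "alice"),
]


def in_degree_centrality(edges):
    """Normalized in-degree: share of the other users who mention each user."""
    users = {user for edge in edges for user in edge}
    mentioned_by = defaultdict(set)
    for source, target in edges:
        if source != target:  # ignore self-mentions
            mentioned_by[target].add(source)
    n = len(users)
    if n < 2:
        return {user: 0.0 for user in users}
    return {user: len(mentioned_by[user]) / (n - 1) for user in users}


centrality = in_degree_centrality(MENTIONS)
ranking = sorted(centrality.items(), key=lambda item: item[1], reverse=True)
print(ranking[0])  # ('bob', 1.0): every other user mentions bob
```

Duplicate mentions by the same author are counted once, so the score reflects how many distinct users point to someone rather than how often.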

Seminars: Social and Web Intelligence, Advanced Business Analytics

Machine Learning (ML)

Machine Learning is a branch of AI focused on training programs to make decisions (e.g., predictions) or identify patterns in large datasets. There are generally three types of ML: supervised learning, for tasks such as classification or regression; unsupervised learning, for pattern recognition; and reinforcement learning, in which the model learns by trial and error instead of from labeled training data as in supervised learning.
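Learning by trial and error can be shown in its simplest form with a multi-armed bandit, a simplified special case of reinforcement learning without states. The sketch below uses an epsilon-greedy strategy. The three actions and their success probabilities are hypothetical and hidden from the agent, which only observes 0/1 rewards.

```python
import random


def epsilon_greedy(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn which action pays off best purely by trial and error.

    reward_probs are the hidden success probabilities of each action; the
    agent only sees 0/1 rewards. With probability epsilon it explores a
    random action, otherwise it exploits the action with the best estimate.
    """
    rng = random.Random(seed)
    n_actions = len(reward_probs)
    counts = [0] * n_actions
    estimates = [0.0] * n_actions
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)  # explore
        else:
            action = max(range(n_actions), key=lambda a: estimates[a])  # exploit
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental mean: move the estimate towards the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


# Hypothetical actions with hidden success rates of 20%, 50% and 80%.
estimates, counts = epsilon_greedy([0.2, 0.5, 0.8])
print([round(e, 2) for e in estimates], counts)
```

No labeled examples are ever provided. The agent discovers that the third action is best only from the rewards of its own attempts, and it ends up choosing that action most often.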

Several important current trends in ML are making it more accessible to practitioners who do not necessarily have deep knowledge of, or experience in, mathematics, statistics, or programming, while also simplifying the data analytics process for experienced data scientists. One such trend is AutoML, which provides ready-to-use templates and kick-starts the whole data science process: developers and data scientists can quickly gain an initial impression of which algorithms would work best with their dataset and which features are the most relevant. A related trend is No-Code ML, which uses a drag-and-drop interface to simplify the whole ML pipeline, making the process quicker and less costly.
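The core idea behind AutoML's "which algorithm works best" step can be sketched as automatically scoring several candidate models with cross-validation and ranking them. The toy example below is only an illustration of that idea, not how any particular AutoML product works. Real tools also automate preprocessing, feature selection, and hyperparameter search. The dataset and the three simple candidate classifiers are hypothetical.

```python
import math
from collections import Counter


def majority_class(train, _point):
    """Baseline: always predict the most frequent training label."""
    return Counter(label for _, label in train).most_common(1)[0][0]


def nearest_centroid(train, point):
    """Predict the label whose class mean (centroid) is closest to the point."""
    sums = {}
    for features, label in train:
        total, n = sums.get(label, ([0.0] * len(features), 0))
        sums[label] = ([t + f for t, f in zip(total, features)], n + 1)
    centroids = {label: [t / n for t in total] for label, (total, n) in sums.items()}
    return min(centroids, key=lambda label: math.dist(centroids[label], point))


def one_nearest_neighbour(train, point):
    """Predict the label of the single closest training example."""
    return min(train, key=lambda example: math.dist(example[0], point))[1]


def cross_val_accuracy(model, data, k=3):
    """Mean accuracy over k folds (every k-th example goes to the same fold)."""
    scores = []
    for fold in range(k):
        test = data[fold::k]
        train = [ex for i, ex in enumerate(data) if i % k != fold]
        correct = sum(model(train, x) == y for x, y in test)
        scores.append(correct / len(test))
    return sum(scores) / k


def auto_select(candidates, data):
    """Score every candidate and return (accuracy, name) pairs, best first."""
    scored = [(cross_val_accuracy(model, data), name) for name, model in candidates.items()]
    return sorted(scored, reverse=True)


# Hypothetical two-feature dataset with labels "low" and "high".
DATA = [((1.0, 1.0), "low"), ((8.0, 8.0), "high"), ((1.5, 2.0), "low"),
        ((9.0, 8.5), "high"), ((2.0, 1.0), "low"), ((8.5, 9.5), "high"),
        ((1.0, 2.5), "low"), ((7.5, 8.0), "high"), ((2.5, 2.0), "low")]
CANDIDATES = {"majority_class": majority_class,
              "nearest_centroid": nearest_centroid,
              "one_nearest_neighbour": one_nearest_neighbour}

for accuracy, name in auto_select(CANDIDATES, DATA):
    print(f"{name}: {accuracy:.2f}")
```

Including a trivial baseline such as `majority_class` is a deliberate choice. It shows how much the real candidates improve over simply guessing the most common label.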

Aside from trends focused on simplifying the process, another trend concerns the data used to build the models: synthetic data. These are data created by simulations or algorithms rather than collected from the real world, and for some purposes they can be as useful as real-world data. Major drivers of this trend are privacy and the general lack of data in certain organizations. Synthetic data is therefore likely to become even more important as discussions about AI ethics gain relevance.
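A deliberately simple way to generate synthetic data algorithmically is to fit a statistical distribution to real records and then sample new records from it. The sketch below assumes each numeric column is normally distributed and samples the columns independently. As a result, it preserves each column's mean and spread but not the correlations between columns. More realistic generators, such as simulations or generative models, try to preserve that structure as well. The "real" records are invented for illustration.

```python
import random
import statistics


def fit_gaussian_columns(rows):
    """Estimate the mean and sample standard deviation of each numeric column."""
    columns = list(zip(*rows))
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]


def sample_synthetic(params, n_rows, seed=0):
    """Draw synthetic rows, sampling each column independently from a normal
    distribution with the fitted mean and standard deviation."""
    rng = random.Random(seed)
    return [tuple(rng.gauss(mu, sigma) for mu, sigma in params) for _ in range(n_rows)]


# Hypothetical "real" patient records: (age, systolic blood pressure).
real = [(34, 118), (45, 127), (52, 135), (61, 142), (29, 115), (48, 130)]
params = fit_gaussian_columns(real)
synthetic = sample_synthetic(params, n_rows=1000)
print(params[0], synthetic[0])
```

No synthetic row corresponds to an actual person, which illustrates the privacy motivation. However, this naive method can still produce implausible combinations of values, for example a young age paired with a very high blood pressure.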

Lecture: Business Intelligence

References

Dong et al., 2021. https://arxiv.org/abs/2112.11739

Futia & Vetro, 2020. https://www.mdpi.com/2078-2489/11/2/122/htm

IBM, 2022. https://www.ibm.com/cloud/learn/natural-language-processing

Ingersoll et al., 2013. Taming Text. Manning Publications.

Lane et al., 2019. Natural Language Processing in Action. Manning Publications.

O’Reilly, 2022. https://www.oreilly.com/library/view/hands-on-natural-language/9781789139495/d522f254-5b56-4e3b-88f2-6fcf8f827816.xhtml

Stanford NER, 2022. https://nlp.stanford.edu/software/CRF-NER.html

Tiddi & Schlobach, 2022. https://www.sciencedirect.com/science/article/pii/S0004370221001788